AI’s contributions to political polarization

Echo chambers arise from social media algorithms

Fujomedia

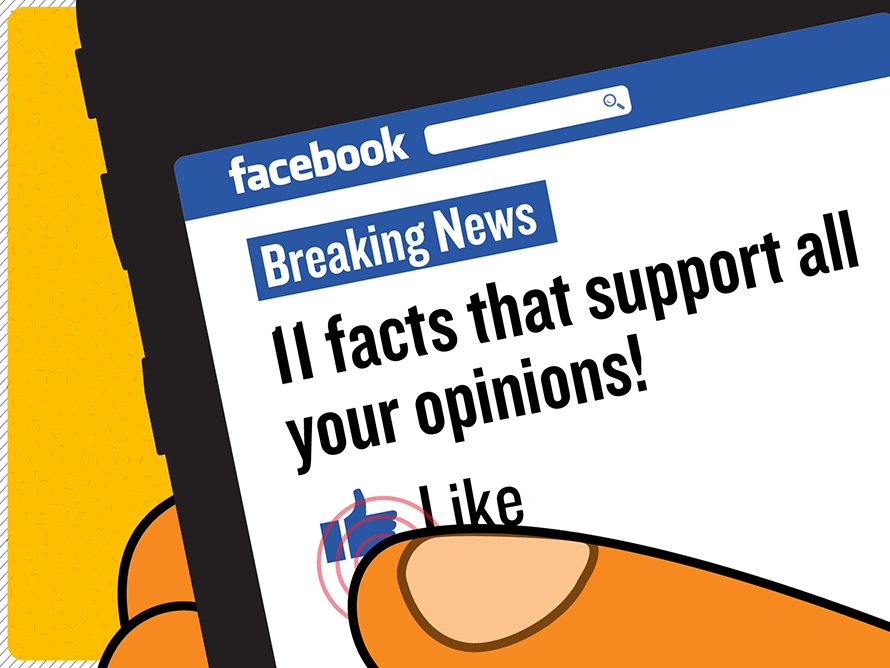

Algorithms on sites like Facebook and TikTok radicalize their users by constantly suggesting the same types of politically charged posts.

You know when your siblings tell your parents a one-sided story that very conveniently leaves out the part where they were rude to you? Did you know that this is happening on our phones every day?

The AI-driven algorithms that social media platforms use to generate a feed tailored to your interests have been shown to increase bias and polarization in viewpoints, especially political ones. This is described as an “echo chamber,” in which an internet user encounters only opinions and news that reinforce their own beliefs and ideas. The rise of artificial intelligence has had positive effects on our society, but there are negatives too, and the echo chamber is one of them.

Artificial intelligence shows us what it predicts we want to see, whether that is an ad for a website you’ve visited frequently or a video from a political viewpoint it expects you to like. It does this because, honestly, who would want to see a video about something they don’t agree with?

TikTok is a great example of the political bias built into social media feeds. Its artificial intelligence can amplify a user’s political views by sifting through a pool of videos and surfacing content that matches their political interests. This pushes the user’s views to become more extreme, as they are consuming only their “side” of the story.
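To make that feedback loop concrete, here is a toy sketch in Python. It is not TikTok’s actual system, which is not public; the function names, the `leaning` label, and the assumption that a user engages only with posts from their preferred side are all hypothetical simplifications chosen for illustration.

```python
from collections import Counter

def rank_feed(posts, history, k=3):
    """Return the k posts whose leaning the user has engaged with most.

    Hypothetical scoring rule: a post's score is simply the number of
    past engagements with its leaning. Ties keep their original order.
    """
    counts = Counter(history)
    ranked = sorted(posts, key=lambda p: counts[p["leaning"]], reverse=True)
    return ranked[:k]

def simulate(posts, preferred, rounds=5, k=3):
    """Track what share of each feed matches the user's preferred leaning."""
    history = []  # leanings of posts the user engaged with so far
    shares = []
    for _ in range(rounds):
        feed = rank_feed(posts, history, k)
        matching = [p for p in feed if p["leaning"] == preferred]
        # Assumption: the user engages only with posts they agree with.
        history.extend(p["leaning"] for p in matching)
        shares.append(len(matching) / k)
    return shares

# A balanced pool: three posts from side "A" and three from side "B".
posts = [{"id": i, "leaning": "A" if i % 2 == 0 else "B"} for i in range(6)]
shares = simulate(posts, preferred="A")
print(shares)
```

Even though the pool is evenly split, the share of same-side posts in this toy feed rises from two thirds in the first round to all of the feed from the second round on, because every click makes similar posts rank higher.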

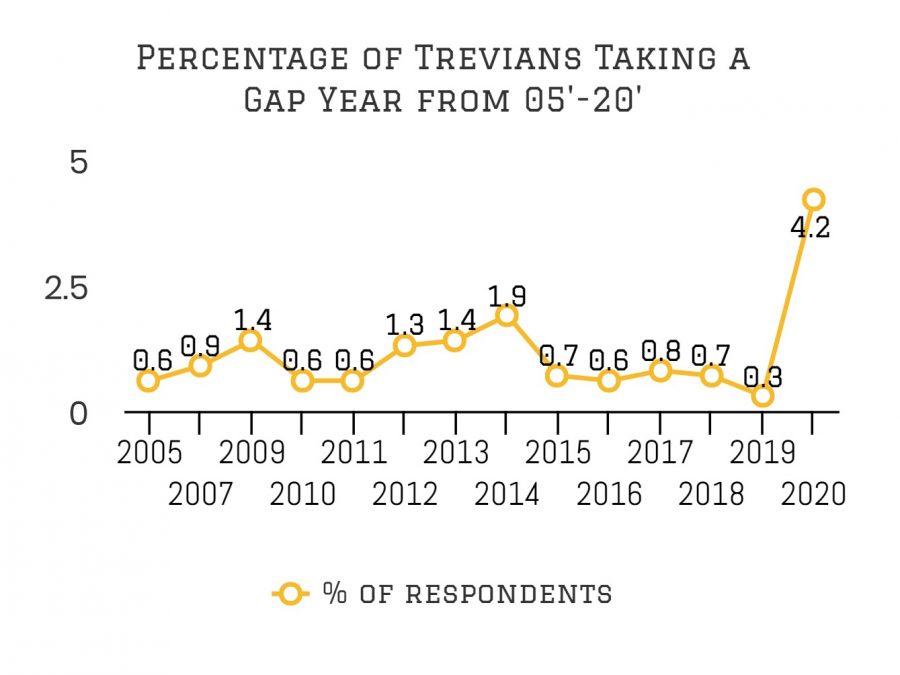

To be clear, this bias happens across many different political views, and it increases political polarization because users see only what they want to see, regardless of whether it is the full story. Research conducted by Pew Research Center has observed a large ideological divide in political values emerging over the past decade, a trend that this kind of selective exposure feeds.

According to research conducted by Brown University, the increase in political polarization has been caused by partisan news channels, which work much like an echo chamber: they display only the views of one party and show viewers what they want to see. The research also found that countries experiencing this divide have seen a dramatic increase in internet use, which suggests a possible correlation.

Research conducted by Brookings added that individuals who stay off social media tend to hold less extreme political views than those who consistently use social media and are exposed to its AI algorithms.

Large social media platforms like Facebook have attempted to address the issue by adjusting their algorithms so that they no longer consistently show users only their preferred content.
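One simple way such an adjustment could work is to interleave posts from different viewpoints instead of showing the top-ranked posts as they come. The Python sketch below illustrates that idea only; it is an assumption for the sake of example, not Facebook’s actual method, and the `diversify` function and `leaning` label are hypothetical.

```python
from collections import defaultdict
from itertools import zip_longest

def diversify(ranked_posts):
    """Interleave posts by leaning, keeping each leaning's internal order.

    Hypothetical re-ranking step: take one post from each leaning in
    turn, so no single viewpoint fills the top of the feed.
    """
    buckets = defaultdict(list)
    for post in ranked_posts:
        buckets[post["leaning"]].append(post)

    mixed = []
    # zip_longest pads shorter buckets with None, which we skip.
    for group in zip_longest(*buckets.values()):
        mixed.extend(post for post in group if post is not None)
    return mixed

# A feed the ranking step filled mostly with side "A".
ranked = [
    {"id": 1, "leaning": "A"},
    {"id": 2, "leaning": "A"},
    {"id": 3, "leaning": "A"},
    {"id": 4, "leaning": "B"},
]
print([p["id"] for p in diversify(ranked)])
```

In this example the lone post from side “B” moves from last place to second, so the user meets a contrary viewpoint near the top of the feed rather than after scrolling past everything they already agree with.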

Brookings stated that “[Facebook] does extensive internal research on the polarization problem and periodically adjusts its algorithms to reduce the flow of content likely to stoke political extremism and hatred.”

However, a study of over 17,000 Americans conducted by Ro’ee Levy found that Facebook’s “content-ranking algorithm” limits users’ exposure to contrary viewpoints. This in turn increases political polarization, because users do not hear about or see any viewpoint but their own.

Authors writing in Proceedings of the National Academy of Sciences of the United States of America described the link between social media algorithms (AI) and echo chambers.

“Social media may limit the exposure to diverse perspectives and favor the formation of groups of like-minded users framing and reinforcing a shared narrative, that is, echo chambers.”

Everyone who uses social media is susceptible to echo chambers because of the constant evolution and use of artificial intelligence. To avoid becoming a victim of these echo chambers, you can thoroughly research the topics you encounter on social media using neutral news outlets. However, limiting social media use is the best solution.